One of the many minutiae that concern me is bandwidth consumption. The fact of the matter is that the internet is not a particularly forgiving means of conveying data from one place to another. It is merely *the* means of conveying data. It is what we have to work with, and everyone has a different connection.

Some poor souls are forced to use dial-up, way out there, in the middle of nowhere, and others are privileged with fiber optic connections (we could use a visit, Google). In the middle are the broadband users, with varying capability, via DSL or cable.

A 'D-' for my perfectly usable connection. It's only near-failing if the application in question is failing the user.

You can see here that my home cable connection has a bandwidth of roughly half a megabyte per second downstream and 100 KB/sec upstream. Nobody reads, writes, sends, or receives anything in bits (except for programmers), so I like to look at these things in terms of bytes, because they are far more relevant to me (and you). You can see that my connection's score is a 'D-'. I could understand that if my priorities involved watching 1080p video. Instead, I'd give my connection a 'B+', because it is something I almost never have to think about; it is plenty fast for my needs. I'd give it an 'A' if it weren't for the random outage that occurs once every few months for an hour or two.

The reality is that it's not the connection that matters; it's how the application uses the connection, and what the end-user's experience is. It makes no difference how I obtain the experience, via 56k dial-up or 1 Gbps fiber, as long as the experience is 'A' worthy. Even the newest consumer GPUs are brought to a crawl by games made by those who have no idea what they are doing. This doesn't mean the GPU isn't up to snuff; it means the game designer is doing gamers a disservice by not taking a realistic view of common hardware configurations into consideration, especially after taking the gamers' money.

My strategy with BITPHORIA is to make something new, and interesting, that takes advantage of newer hardware capabilities to perform novel rendering, without requiring the most up-to-date setup. Being a multiplayer game, this applies to a player's internet connection as well.

If I can support the vast majority of the existing configurations out there, then that maximizes the potential player base, which translates to customers. Primarily, though, I don't want to leave anybody out. I want the high-end gaming rig players to be happy with their investment, and I also want the newbies on netbooks to be able to enjoy a rousing session of BITPHORIA.

I don't want people to be forced to play on large fiber-connected servers. I want a newbie with a netbook on a wifi connection to be able to host a game server that can support at least a few players. Even a 'low-end' broadband connection like my own has only a 100 KB/sec upstream, which could easily be saturated if I were to host a server running any popular FPS game with 8 players. To make this possible, there must be a minimal amount of data traversing the network connections between the server and player clients.
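To put a rough number on that upstream limit, here is a back-of-the-envelope sketch in Python. The 100 KB/sec upstream and the 8 players come from the paragraph above; the update rates, the use of UDP over IPv4, and the function name `payload_budget` are assumptions made only for illustration.

```python
UDP_IPV4_HEADER_BYTES = 28  # 20-byte IPv4 header + 8-byte UDP header (assumes UDP/IPv4)

def payload_budget(upstream_bytes_per_sec, players, updates_per_sec):
    """Bytes of game data the host can afford per player per update."""
    per_packet = upstream_bytes_per_sec / (players * updates_per_sec)
    return per_packet - UDP_IPV4_HEADER_BYTES

# 20 and 60 updates/sec are assumed example rates, not measured values.
print(payload_budget(100_000, 8, 20))  # 597.0
print(payload_budget(100_000, 8, 60))
```

The budget shrinks quickly as the update rate or player count goes up, and it assumes the host's upstream is used for nothing else.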

Naturally, there are several strategies for minimizing bandwidth usage when conveying a game state across a network connection. Quantizing, or 'bit-packing', data based on its type and behavior is one extremely important method. Typically, values for angles, orientations, and so on are stored and manipulated as single-precision floating-point values (or double-precision, if your application demands it). Single-precision floating-point values (aka 'floats') are 32 bits wide, and sometimes only a small part of their representable range is used. For instance, in a game, you may have objects with velocities that never go above a certain speed. This knowledge can be used to effectively strip the unused bits from an object's velocity information.
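As a minimal sketch of that kind of quantization (not BITPHORIA's actual code), the Python below maps a bounded float onto a fixed number of bits and back. The speed limit `MAX_SPEED`, the 12-bit width, and the function names are hypothetical choices for the example.

```python
def quantize(value, lo, hi, bits):
    """Map a float in [lo, hi] to an unsigned integer that fits in `bits` bits."""
    levels = (1 << bits) - 1
    clamped = min(max(value, lo), hi)
    return round((clamped - lo) / (hi - lo) * levels)

def dequantize(q, lo, hi, bits):
    """Recover an approximation of the original float from the integer."""
    levels = (1 << bits) - 1
    return lo + q / levels * (hi - lo)

MAX_SPEED = 64.0  # hypothetical speed limit, in game units per second
BITS = 12         # hypothetical width: 12 bits on the wire instead of 32

q = quantize(12.5, -MAX_SPEED, MAX_SPEED, BITS)
restored = dequantize(q, -MAX_SPEED, MAX_SPEED, BITS)
print(q, restored)
```

The trade-off is precision: the restored value can differ from the original by up to half a quantization step, so the bit width has to be chosen per field based on how much error the simulation can tolerate.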

Another strategy is to avoid sending redundant data, and to send certain properties only when they change, instead of re-sending the same information over and over. This applies to things like an object's position in the game and its orientation. If the object is stationary, there is no need to send this information about it.
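One common way to express "only send what changed" is a bitmask of changed fields followed by just those values. The sketch below assumes a made-up three-field object state (`FIELDS`) and hypothetical helper names; it also assumes sender and receiver agree on the previous state, which a real protocol has to guarantee somehow.

```python
FIELDS = ("x", "y", "angle")  # hypothetical per-object fields

def encode_delta(prev, curr):
    """Return (mask, values): one mask bit per changed field, plus the new values."""
    mask = 0
    changed = []
    for i, name in enumerate(FIELDS):
        if curr[name] != prev[name]:
            mask |= 1 << i
            changed.append(curr[name])
    return mask, changed

def apply_delta(prev, mask, changed):
    """Rebuild the current state from the previous state and a delta."""
    state = dict(prev)
    values = iter(changed)
    for i, name in enumerate(FIELDS):
        if mask & (1 << i):
            state[name] = next(values)
    return state

prev = {"x": 1, "y": 2, "angle": 90}
curr = {"x": 1, "y": 2, "angle": 95}
mask, changed = encode_delta(prev, curr)
print(mask, changed)  # 4 [95]
```

A stationary object produces a mask of zero and no values at all, which is the cheapest possible update.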

Another issue that comes up is the game's network update rate. The update rate, in most client/server games, must be as high as possible without putting too much strain on the server or client connections. With lower update rates the game can begin to feel a little sloppy, especially to gamers who have acquired a fine sense for such things. I've seen game servers with their update rates so high that some player connections couldn't keep up. This is just plain unacceptable. Some games keep their update rates really low because they send so much data per update that a higher rate would make the game unplayable on slower connections.

Keeping a low update rate is another possible strategy, but it needs fine-tuning alongside the parts of the networking code that handle interpolation and extrapolation, so that the game simulation's fidelity is maintained.

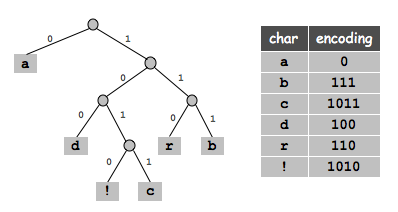

Compressing the network data before actually sending it is the strategy I am currently working to employ in BITPHORIA. My initial plan was to just follow suit with Quake3's use of a static Huffman encoding, which boils down to assigning each byte value a new binary code, where more frequently appearing values get a shorter bit code and less frequent values get a longer one. This is a form of entropy coding.
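For readers who have not seen it, here is a small textbook-style Huffman code builder in Python. It is a generic sketch, not Quake3's or BITPHORIA's implementation; the function name `huffman_codes` and the sample bytes are made up. For a static scheme, the table would be built once from representative data and shared by both ends.

```python
import heapq
from collections import Counter

def huffman_codes(data):
    """Return {byte_value: bit_string} for a Huffman code built from `data`."""
    freq = Counter(data)
    if not freq:
        return {}
    if len(freq) == 1:
        return {next(iter(freq)): "0"}
    # Heap entries: (weight, unique tiebreak, {symbol: code built so far}).
    heap = [(w, sym, {sym: ""}) for sym, w in freq.items()]
    heapq.heapify(heap)
    while len(heap) > 1:
        w1, t1, c1 = heapq.heappop(heap)  # two least frequent subtrees
        w2, t2, c2 = heapq.heappop(heap)
        merged = {s: "0" + c for s, c in c1.items()}
        merged.update({s: "1" + c for s, c in c2.items()})
        heapq.heappush(heap, (w1 + w2, min(t1, t2), merged))
    return heap[0][2]

sample = b"aaaaaaaabbbc"  # made-up data: 'a' is common, 'c' is rare
codes = huffman_codes(sample)
encoded = "".join(codes[b] for b in sample)
print(len(sample) * 8, "bits raw ->", len(encoded), "bits encoded")
```

The frequent byte ends up with a one-bit code and the rare ones with two-bit codes, so encoding and decoding reduce to table lookups.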

With entropy encoding it's all about exploiting the known likelihood that a byte will be a certain value. This is orthogonal to dictionary encoders, which exploit the fact that there are usually repeating patterns in data. Entropy encoding doesn't care where the values are in the stream. They can be clumped together next to like values, or spread evenly, and the output will be the same size as long as there are the same number of each possible value. Dictionary encoders typically produce much better compression than entropy encoders, but are much slower. They also do not operate well on small pieces of data, and are better equipped to compress large data.
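The order-independence claim is easy to check numerically. The sketch below computes the ideal output size of a simple per-byte entropy coder for the same bytes in clumped and shuffled order, and contrasts that with `zlib` (DEFLATE, which combines a dictionary stage with Huffman coding). The sample data and the helper name `ideal_size_bits` are made up for the example.

```python
import math
import random
import zlib
from collections import Counter

def ideal_size_bits(data):
    """Shannon bound, in bits, for a coder that only uses per-byte frequencies."""
    n = len(data)
    counts = sorted(Counter(data).values())
    return -sum(c * math.log2(c / n) for c in counts)

clumped = b"a" * 700 + b"b" * 200 + b"c" * 100
shuffled = bytearray(clumped)
random.Random(0).shuffle(shuffled)  # same bytes, different order
shuffled = bytes(shuffled)

# Same value counts, so the entropy bound is identical for both orderings.
print(ideal_size_bits(clumped), ideal_size_bits(shuffled))
# A dictionary-based compressor benefits heavily from the clumped ordering.
print(len(zlib.compress(clumped)), len(zlib.compress(shuffled)))
```

The first line prints the same figure twice, while the `zlib` sizes differ because the dictionary stage can exploit the long runs in the clumped version.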


There are two major entropy encoding algorithms: Huffman coding and arithmetic/range coding. The deal here is that Huffman can be reduced to, as I mentioned above, a simple exchange of byte values for bit codes to be output. This works well as a simple array/table lookup in code. Arithmetic/range coding lends itself to a better compression ratio, because the resulting bit codes more closely match the probabilities of each possible value, and therefore produce output that is closer to the actual informational content of a piece of data. The catch is that arithmetic/range encoding is more CPU intensive.

Range coding represents data as a single value generated by recursively narrowing down each symbol in its "range".

Now, to be honest, I could probably get away with simply using either, and nobody would know the difference. This is where my neurosis comes into play. If I can do better, I will do better. So after some research I saw potential in the idea of using arithmetic coding, specifically range encoding, which is the integer-based version of arithmetic coding.

After a day I came up with my very own entropy encoding, which was essentially a bastardized hybrid of Huffman and range encoding. Without an academic background in math, I was simply fumbling around, hoping to stumble across a discovery. The goal was to get the speed of Huffman encoding with the higher precision of range encoding. The end result, dubbed "binary-search encoding", has roughly the speed of Huffman, but the compression ratio of neither Huffman nor range encoding. So that was basically a failure. I was able to compress a 512 KB sample of BITPHORIA's networking data down to 405 KB, a compression ratio of ~1.26, whereas a simple Huffman encoder can get the same data down to 341 KB, a ratio of ~1.5. My binary-search encoding was not gonna fly, at least not in this situation.

Arithmetic coding does the same as, or better than, Huffman, because Huffman is essentially a special case of arithmetic coding in which every value's probability is a negative power of two (1/2, 1/4, 1/8, and so on). Huffman has to spend a whole number of bits on each value, which is why it cannot get as close to the actual informational content of a piece of data when the real probabilities fall between those powers.
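A small worked example makes the powers-of-two point concrete. The probabilities here are made up for illustration and are not measurements of BITPHORIA's data. Take a source with only two values, $A$ with probability $0.9$ and $B$ with probability $0.1$. Its information content per value is

$$
H = -\sum_i p_i \log_2 p_i = -(0.9 \log_2 0.9 + 0.1 \log_2 0.1) \approx 0.469 \text{ bits}
$$

Huffman cannot assign less than one whole bit to either value, so its average cost is

$$
L_{\text{Huffman}} = 0.9 \cdot 1 + 0.1 \cdot 1 = 1 \text{ bit}
$$

which is more than twice the information content. By contrast, with probabilities $\tfrac12, \tfrac14, \tfrac14$ the Huffman code lengths are $1, 2, 2$ and the two figures coincide:

$$
H = \tfrac12 \log_2 2 + 2 \cdot \tfrac14 \log_2 4 = 1.5 \text{ bits} = L_{\text{Huffman}}
$$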

During my research to better understand range encoding, and why it works as well as it does, I was hoping to incorporate its principles into my little algorithm to get better compression than Huffman, even if it wasn't as good as true range encoding. This is when I stumbled across Asymmetric Numeral Systems (ANS) and Finite State Entropy (FSE): a new approach, recently developed and even more recently made as fast as Huffman encoding, with the compression of range/arithmetic encoding.

ANS captures the raw essence of arithmetic coding, without the convoluted means of obtaining such an encoding. At the end of the day the system breaks encoding and decoding down into a table of bit codes for each possible byte value, just like an optimized Huffman implementation does. The end result, though, is a better choice of bit codes for byte values, made by maintaining an internal 'state' from which encoding a symbol into some bits yields a new 'state' for the next one.
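To show what "state in, new state out" looks like, here is a toy sketch of the range variant of ANS (rANS) in Python. It is not the table-driven FSE scheme described above and not BITPHORIA's code: it keeps the whole message in one arbitrarily large integer state, whereas practical implementations stream bits out to keep the state inside a machine word. The function names, frequencies, and message are made up.

```python
def build_starts(freqs):
    """Return (total, {symbol: cumulative start}) for a frequency table."""
    total = 0
    starts = {}
    for sym in sorted(freqs):
        starts[sym] = total
        total += freqs[sym]
    return total, starts

def rans_encode(message, freqs):
    """Fold every symbol into a single integer state."""
    total, starts = build_starts(freqs)
    state = 1
    for sym in reversed(message):  # encode backwards so decoding runs forwards
        f = freqs[sym]
        state = (state // f) * total + starts[sym] + (state % f)
    return state

def rans_decode(state, length, freqs):
    """Peel `length` symbols back out of the state."""
    total, starts = build_starts(freqs)
    out = []
    for _ in range(length):
        slot = state % total
        sym = next(s for s in freqs if starts[s] <= slot < starts[s] + freqs[s])
        out.append(sym)
        state = freqs[sym] * (state // total) + slot - starts[sym]
    return "".join(out)

freqs = {"a": 6, "b": 1, "c": 1}  # made-up frequency table
message = "aaabaaca"
state = rans_encode(message, freqs)
print(state.bit_length(), rans_decode(state, len(message), freqs))
```

Frequent symbols grow the state only slightly and rare ones grow it a lot, so the cost per symbol is not forced to a whole number of bits. For this message the state fits in 9 bits, compared with 16 bits at a flat two bits per symbol.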

My initial attempt that utilized a binary search was flawed in that it had to 'start from scratch' with each symbol that was to be encoded. There was no internal state being maintained, and each symbol was treated as a lone, isolated incident without any context. ANS maintains this context, which allows it to use fewer bits for encoding/decoding.

If you enjoy compression and information theory, please explore these links!

Links:

RealTime Data Compression - Finite State Entropy - FastCompression.blogspot.com

Asymmetric Numeral System - EZCodeSample.com

Simple and fast Huffman Coding - CodeProject.com
